SEO in NLG Projects

The quality of online text must be judged not only by its relevance to readers but also by how well it is adapted to the requirements of search engines.

From an SEO point of view, the same rules apply to generated texts as to texts that are written by humans, but due to the different production environments, there are some specifics in NLG projects that need to be taken into account.

What to Expect From This Guide?

In this guide, you will learn how you can improve your Google ranking through text automation, and which SEO actions you can implement on your own website with the AX platform.

Knowledge about SEO content is included as far as it concerns text automation. However, the guide does not replace keeping up with the developments and requirements of search engine optimization in a broader context.

Why the AX Platform Is Also an SEO Tool in E-Commerce

Individual and customized content is an important factor for ranking in the organic search.

Search engine optimization affects many areas, such as the user experience on the website, the time it takes for the website to load, or the number and quality of backlinks. The overall question for search engines, however, remains how to bring the right content to the users: they want to present content that matches the search. So matching, high-quality content is essential for SEO.

You can meet these requirements for onsite SEO with the right use of the AX platform, which lets you improve your Google ranking through many relevant factors:

- First, simply by providing content at all, i.e. product descriptions. Text is important for search engines because that is what they understand: for humans, a picture can say more than a thousand words, but search engines cannot see images. So make sure to add a description to each product.

- The platform allows variance of texts. This means that there is no longer a risk of being penalized in the ranking because of duplicate content. In most cases, duplicate content is a result of using the manufacturer's content for your website.

- Customizing your content to the individual product will lead to higher relevance of your content for search engines and customers. This also applies to matching your content to different user interests.

- Content freshness is a decisive ranking factor. With the AX platform, the content can be updated, even automatically.

- Implementing additional information for search engines (e.g. meta tags) also improves the ranking. You can automatically generate meta tags that fit each of your product descriptions or webpages.

- You can control the use and distribution of chosen keywords in your content.

Use Content Freshness as a Ranking Factor

What happens with content over time? Content gets outdated because changes in the product and in the world will inevitably occur, planned or unplanned.

Over time, the gap between the static content you have written once and the evolving reality becomes more obvious:

- Initially minor discrepancies grow into significant errors over time.

- Content loses more and more of its validity and relevance.

- Content is no longer appealing and attractive!

Following this reasoning, recently updated content should rank higher than stale content. But an SEO study (by SEO tool developer Ahrefs, based on 2 million random keywords) showed that only 1-2% of the pages in Google position No. 1 are less than one year old. The average top ten ranking page is more than two years old. So the SERP is clearly dominated by old pages.

This observation demonstrates that search engines face a dilemma when they try to present high-quality content to their users in the first positions. On the one hand, content declines over time; on the other hand, content authority can only be built up over time. If content is sustainable and lasting, searchers assume quality.

| Evergreen content | Fresh content |
|---|---|
| Well-aged, many backlinks (takes time), established, proven relevant for the issue, sustainable and lasting | Up to date, relevant to the reader |
| Example topic: "the anatomy of the human brain" | Example topic: "The Top 10 Smartphones" |

Thus, you have to distinguish the actual needs of the user. For this, Google invented the QDF (= Query Deserves Freshness) algorithm. This mathematical model tries to determine when users want new information and when they don't. To do so, Google observes blogs, magazines, news portals, and search queries to detect "hot" topics. If the QDF is above average, fresh content ranks higher than established content.

There are usually three categories with increased requirements for fresh content:

- The mentioned "hot" topics or recent events ("Taylor Swift boyfriend").

- Regularly recurring events ("olympics").

- Frequent updates: topics that need regular updates because new information appears frequently. For example, if you're researching the best smartphone cameras, or you're in the market for a new car and want Jeep Compass reviews, you probably want the most up-to-date information.

For working with the AX platform this leads to two conclusions:

- The type of content that is most suitable for text generation is directly linked to the category "frequent updates". Here you automatically have an advantage over handmade text production, because your texts are updated as soon as the data changes.

- You can use text generation to be prepared to provide content for the second category "recurring events" or link your content to recurring events to benefit from the ranking factor "freshness".
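
The idea of updating texts "as soon as the data changes" can be illustrated with a small change detector. This is only a sketch under assumptions: the function names `data_fingerprint` and `needs_regeneration` and the example product record are hypothetical, and the guide does not describe how the AX platform itself detects data changes.

```python
import hashlib
import json


def data_fingerprint(record):
    """Return a stable hash of a product record (a dict of JSON-serializable fields)."""
    # Sorting the keys makes the hash independent of the field order.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_regeneration(record, stored_fingerprint):
    """True if the data changed since the text was last generated."""
    return data_fingerprint(record) != stored_fingerprint


# Hypothetical product data before and after a price change.
old = {"name": "Chainsaw", "price": 299.0}
new = {"price": 279.0, "name": "Chainsaw"}

stored = data_fingerprint(old)
print(needs_regeneration(old, stored))  # False: nothing changed
print(needs_regeneration(new, stored))  # True: the price changed
```

Storing one fingerprint per product keeps the check cheap, so only texts whose underlying data actually changed need to be regenerated.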

Duplicate Content - Disadvantage in Ranking?

In SEO terms, duplicate content is an often-discussed problem. In general, duplicate content is understood as content that appears on more than one webpage. These webpages can be on one domain (internal duplicate content) or on different domains (external duplicate content). According to Google, duplicate content occurs when content either "(..) completely match other content or are appreciably similar."

External Duplicate Content

External duplicate content is created when the same content is published on multiple websites, or when text is copied from other websites. In e-commerce, this often happens when online stores use the manufacturers' descriptions instead of their own product descriptions. The manufacturers' descriptions are also used in other places on the net, so Google classifies them as not relevant: because they do not provide the user with any added value, they are categorized as useless or even harmful copies:

“Where is my value add? What does my site that doesn’t have original content add compared to these other hundreds of sites? So whenever possible I urge you to try to have original content, try to have a really unique value. If not there’s no reason why anybody wants stumble on your site. So try to have an unique angle and not the same stuff that everybody else has on their webpage as well.” (Matt Cutts, former head of Search Quality Team at Google)

Internal Duplicate Content

Internal duplicate content within a domain does not seem to have a big impact on search engines. It appears when the same content is displayed in different contexts, for instance on product, category, or filter pages. Internal duplicate content is produced for various reasons, for example automatically by the CMS, which puts the same pages in different navigation points, or by identical category texts or filter functions.

WARNING

Sorting a product into several store categories also produces duplicates, because store systems then often generate different URLs for one and the same product, such as:

- www.myshop.com/gardening-supplies/chainsaw-stihl.html

- www.myshop.com/professional-tools/chainsaw-stihl.html

- www.myshop.com/stihl/chainsaw-stihl.html
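
To find out whether a shop is affected, URLs like the ones above can be grouped by product. The following is a minimal sketch that assumes the last path segment identifies the product, as it does in the example URLs; the function name `group_by_product_slug` is hypothetical, and real store systems may need a different key, such as a product ID.

```python
from collections import defaultdict
from urllib.parse import urlparse


def group_by_product_slug(urls):
    """Group URLs by their last path segment (assumed to identify the product)."""
    groups = defaultdict(list)
    for url in urls:
        # urlparse only recognises the host if a scheme is present, so add one if missing.
        parsed = urlparse(url if "://" in url else "https://" + url)
        slug = parsed.path.rstrip("/").rsplit("/", 1)[-1]
        groups[slug].append(url)
    # Keep only products that are reachable under more than one URL.
    return {slug: found for slug, found in groups.items() if len(found) > 1}


urls = [
    "www.myshop.com/gardening-supplies/chainsaw-stihl.html",
    "www.myshop.com/professional-tools/chainsaw-stihl.html",
    "www.myshop.com/stihl/chainsaw-stihl.html",
]
duplicates = group_by_product_slug(urls)
for slug, found in duplicates.items():
    print(slug, "is reachable under", len(found), "URLs")
```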

Using Variance Tools for Internal Duplicate Content

The experts from Google Webmaster Central are aware of this way of producing duplicate content, but they also give an alternative example of how to avoid duplication. And this reads like a manual for automated text production: unique output for each dataset.

“Minimize similar content: If you have many pages that are similar, (...) For instance, if you have a travel site with separate pages for two cities, but the same information on both pages, you could either merge the pages into one page about both cities or you could expand each page to contain unique content about each city.”

Keeping your rate of internal duplicate content low is simple with an NLG project. The basic rule is to bring variance into your NLG project in as many different ways as possible. A number of texts are variant if they differ in their choice of words, train of thought, optical appearance or message.

So These are Some Suggestions for Increasing Variance Within Your NLG Project:

- Use as many data fields as possible for your text. As the content changes, so does the resulting text.

- Create multiple stories for your content. They are used to turn statements on or off, and change their order.

- Use branches for creating variance on statement and word level (synonyms).
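
Whether these measures work can be checked by comparing generated texts with each other. The sketch below uses Jaccard similarity over word sequences (shingles), which is one common way to estimate text overlap; it is an illustration chosen for this guide, not a feature of the AX platform, and the function names and the shingle size of 3 are assumptions.

```python
def word_shingles(text, size=3):
    """Return the set of overlapping word sequences of length `size`."""
    words = text.lower().split()
    if len(words) < size:
        # Very short texts are treated as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Share of shingles two texts have in common (0.0 = disjoint, 1.0 = identical)."""
    a = word_shingles(text_a, size)
    b = word_shingles(text_b, size)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Two hypothetical product texts generated from the same data.
text_a = "This chainsaw has a 40 cm guide bar and weighs 4.5 kg."
text_b = "Weighing 4.5 kg, the saw comes with a 40 cm guide bar."
print(round(jaccard_similarity(text_a, text_b), 2))
```

A value close to 1.0 for two product texts suggests that more data fields, stories, or branches are needed.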

Identifying the Search Intent

As has already been pointed out, the key message for optimizing content for search engines is: Focus on relevance for the user/reader! To be able to implement this, it is not enough to know the users' behavior and interests on your website. Rather, it is necessary to know how users behave when they search on the search engine page.

So, search engine optimization isn’t possible without knowing how people search for things on the internet. One important aspect of SEO is to answer the questions people put into the search field of Google, and to make it very clear that your answer is the best one for their question.

For this you have to know what people search for on Google and what they expect to find with their searches. This means that you have to understand the intent behind the search queries.

| Search intent type | What are they looking for? | What do they put in the search field? |
|---|---|---|
| Information | They want to get some facts or information about a topic, a problem or issue. | "Clean oven", "best time to sow lettuce", "How to..." |
| Navigation | They want to get to a website. | "Facebook", "BBC" |
| Transaction | People want to buy things online and are looking for a good offer. | "Best price smartphone", "buy sofa" |
| Commercial | People want to buy something, but not right now; they want to do some research first (somewhere between informational and transactional intent). | "Best camera in smartphone", "Galaxy Samsung S10" |

With this concept, you can write your content more precisely, tailored to your users' interests, and you can give users the information they want, according to their search intent.

First, in your content strategy you have to decide for which of these categories you want to provide answers. For e-commerce companies and online shops the transactional and commercial search intents are the most important ones, but also delivering information about certain issues that are close to your products can be promising.

Second, you need to distribute these content building blocks across your websites in a purposeful way. The search intent should not only be considered in the body text or the headlines on the website, but also in other more hidden places, such as the naming of webpages and especially in the meta tags, i.e. in the places where information about the website is provided.

Meta Tags and Title Tags on the NLG Platform

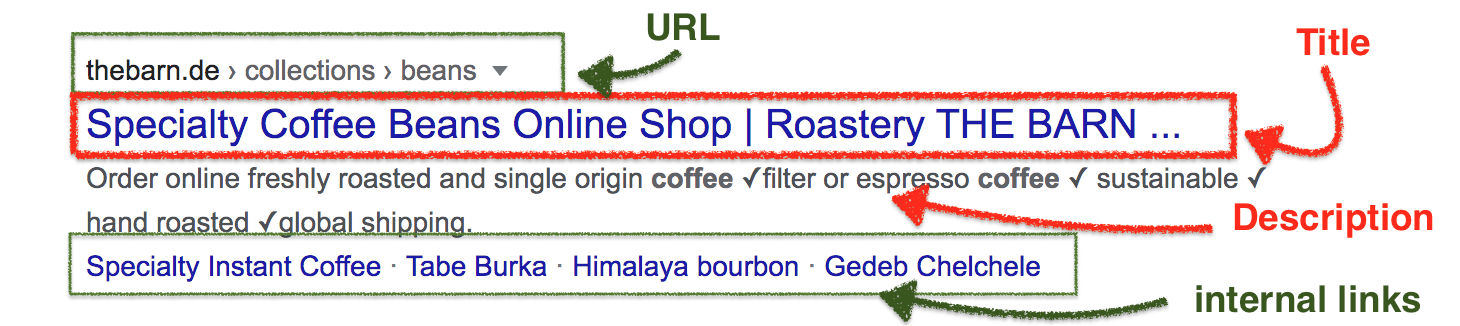

Anatomy of the Search Engine Result Page (SERP)

The quality of this snippet decides whether the user feels that the page matches their search intent, and therefore whether they click on it or not. At this point, it is not only about occupying one of the top ranks on the search engine result page (SERP) but also about showing that your website is relevant for the user.

The content the user sees on the SERP usually originates from the meta tags of the webpage:

| Element | Recommendation |
|---|---|
| URL/internal links | Clear structure of your website and apt naming of the different categories are useful. |
| Meta-Tag Description | Does not affect ranking. Should be around 140-160 characters (for mobile search 100-120 characters). Make your keywords in this meta-tag bold. |
| Meta-Tag Title | Should be around 65 characters. Put the keyword as far to the beginning as possible. Respond to the search intent (more details on the title tag in the next chapter). |
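
The length guidelines from the table can be checked automatically before meta tags are published. This is a minimal sketch: the limits are taken from the table above, while the function name `check_meta_lengths` and the wording of the warnings are assumptions made for illustration.

```python
TITLE_MAX = 65                         # "around 65 characters"
DESCRIPTION_RANGE = (140, 160)         # desktop search
MOBILE_DESCRIPTION_RANGE = (100, 120)  # mobile search


def check_meta_lengths(title, description, mobile=False):
    """Return a list of warnings; an empty list means both lengths fit the guidelines."""
    low, high = MOBILE_DESCRIPTION_RANGE if mobile else DESCRIPTION_RANGE
    warnings = []
    if len(title) > TITLE_MAX:
        warnings.append(
            f"title has {len(title)} characters, guideline is about {TITLE_MAX}"
        )
    if not low <= len(description) <= high:
        warnings.append(
            f"description has {len(description)} characters, guideline is {low}-{high}"
        )
    return warnings


# Hypothetical title and a description that is clearly too short.
for warning in check_meta_lengths("Chainsaws for professionals", "Too short."):
    print(warning)
```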

Managing Meta Data

It is recommended to optimize at least the descriptions and titles for your most relevant webpages and keywords. You can generate your meta-tags on the platform in two ways:

1. Keep the meta-tags in the project

You can insert and name the parts of your content that will have the functions of meta-tags inside your text project. This has the benefit of maintaining your body text and meta data in one go.

How to: Explicitly indicate the limits of the meta tags to the software, using, for instance: ###METATAGSSTART###the tags##METATAGSEND### (this will be trimmed in your API connection).

Then the meta tags will be rendered before the text, in the same text production.

WARNING

But this approach requires that you use HTML in the whole project instead of markdown. Additionally, the text has to be passed on to a different data field of your API (content instead of content_html). Read Markdown and the API for more details.
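
The marker approach of option 1 can be pictured as a simple split of the rendered output into a meta tag block and the body text. The sketch below only illustrates the idea: the markers are copied verbatim from the example above and passed in as parameters, the function name `split_meta_block` and the sample output are hypothetical, and the way the platform actually trims the markers is not described in this guide.

```python
def split_meta_block(rendered, start_marker, end_marker):
    """Split rendered output into (meta_block, body_text).

    If either marker is missing, the meta block is empty and the
    whole output is returned as body text.
    """
    start = rendered.find(start_marker)
    if start == -1:
        return "", rendered.strip()
    end = rendered.find(end_marker, start + len(start_marker))
    if end == -1:
        return "", rendered.strip()
    meta = rendered[start + len(start_marker):end].strip()
    body = (rendered[:start] + rendered[end + len(end_marker):]).strip()
    return meta, body


# Markers as written in the example above; the rendered text is made up.
START_MARKER = "###METATAGSSTART###"
END_MARKER = "##METATAGSEND###"
rendered = (
    START_MARKER
    + '<meta name="description" content="Chainsaw for professionals">'
    + END_MARKER
    + "<p>Body text.</p>"
)
meta, body = split_meta_block(rendered, START_MARKER, END_MARKER)
print(meta)
print(body)
```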

2. Accompanying Meta Tag Projects

Create a Meta-Tag Project for each of your text projects. This way you can manage your text project as usual and keep the meta tag generation separate.

Optimize Your Title-Tag

The title tag is one of the most important on-page factors in SEO, and it also occupies the most prominent place on the SERP. In addition, browsers use title tags in browser tabs and when bookmarking pages. In social media, title tags are used as the headline when a URL is shared, for example when Twitter Cards markup is missing.

Basics of a commercial/transactional title tag:

- Answer the question as briefly and precisely as possible: What is this page about?

- Use your main keyword (or the crucial details) at the beginning of the title tag. Think about using brand names in the title tag, as well.

- Name your best points referring to the search intent: price, discounts, number of products ("displays a great range of products"), being up-to-date (summer 2020 collection), and target group (professionals).
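
These basics can be turned into a small helper that puts the main keyword first and only adds further points as long as the title stays within the recommended length. This is a sketch under assumptions: the function name `build_title`, the " | " separator, and the example shop name are hypothetical, and the limit of 65 characters is the guideline value mentioned in the previous chapter.

```python
def build_title(keyword, selling_points, brand=None, max_length=65):
    """Assemble a title tag with the main keyword first.

    Selling points and the brand are appended in order; a part that
    would push the title over `max_length` is skipped. The keyword
    itself is never shortened.
    """
    parts = list(selling_points)
    if brand:
        parts.append(brand)
    title = keyword
    for part in parts:
        candidate = f"{title} | {part}"
        if len(candidate) <= max_length:
            title = candidate
    return title


title = build_title(
    "Chainsaw",
    ["Summer 2020 collection", "for professionals"],
    brand="MyShop",
)
print(title, len(title))
```

Skipping parts instead of cutting the string keeps every remaining element readable, at the cost of sometimes leaving some of the available length unused.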

Small SEO Glossary

- Onsite optimization: optimizing elements on the website for better ranking. Offsite optimization focuses on the ranking factors outside the website, e.g. links.

- Duplicate content: content that is found within more than one URL.

- SERP: search engine result page.

- Meta tags: areas in HTML code that contain information about a webpage.

- Description (meta-tag): an HTML element that describes the content of a page in one or two sentences.

- Title tag: a required HTML element, sometimes called SEO title; not to be confused with the meta tag that is named title.

What's Next?

You can learn more about the topic "How can I increase the variance of my texts?" in Peter's webinar.